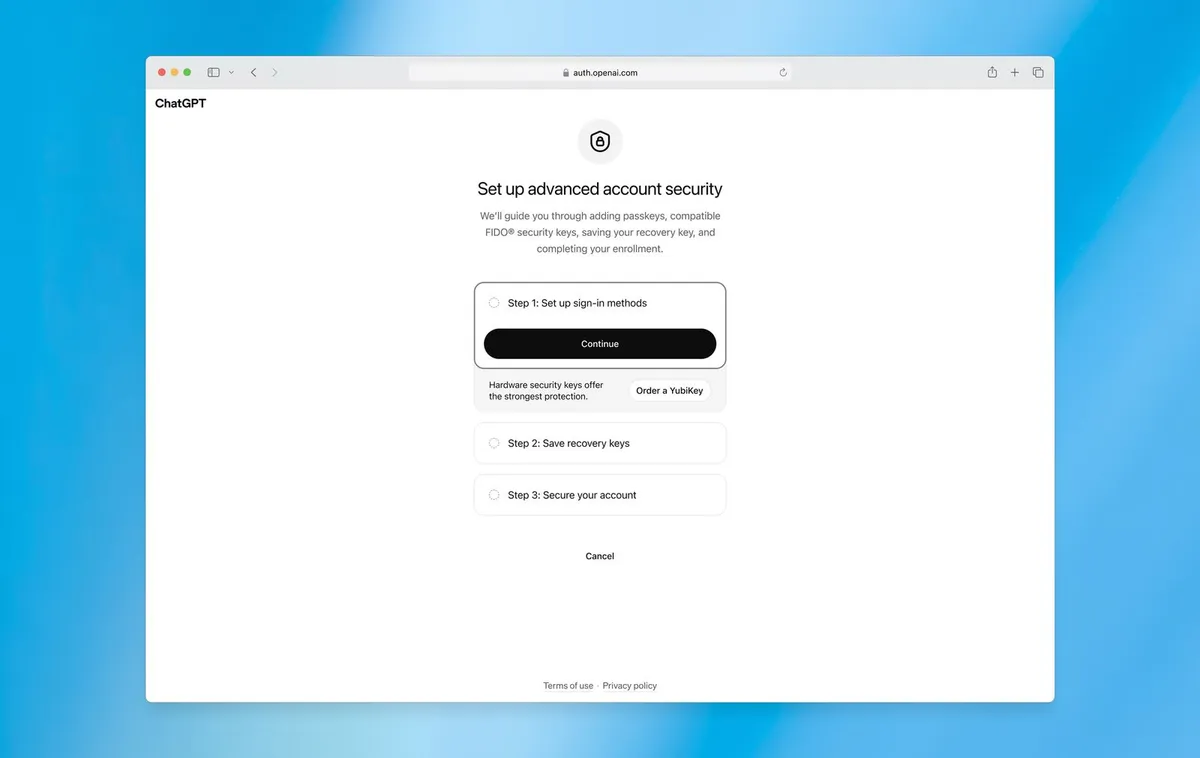

If you've ever worried about someone getting into your ChatGPT account - whether you're a developer, a journalist, or just someone who stores a lot of sensitive work in AI tools - OpenAI has been listening. The company is rolling out a new feature called Advanced Account Security, designed specifically for users who consider themselves potential targets of phishing attacks or account takeovers, according to a report from Wired.

Who is this actually for?

This isn't a blanket update that affects every casual user. Advanced Account Security is aimed at people with elevated risk profiles - think activists, executives, security researchers, and developers building on platforms like Codex, OpenAI's coding-focused tool. These are exactly the kinds of accounts bad actors would love to compromise, because the payoff is higher and the information stored is often more sensitive.

It's a smart move from OpenAI. As these tools become more embedded in professional workflows, they increasingly hold real intellectual and operational value. Your ChatGPT history might contain business strategies, code, client information, or research that you absolutely don't want falling into the wrong hands.

Why this matters beyond the tech crowd

Account security features used to feel like something only IT departments cared about. But in 2024 and beyond, AI assistants are genuinely personal - they know your projects, your writing style, your questions. A compromised account isn't just an inconvenience; it's a privacy breach.

Phishing attacks have also grown considerably more sophisticated. Now that AI tools can convincingly mimic a person's communication style, the old advice to "just check for typos" in suspicious emails doesn't cut it anymore. Putting a stronger lock on the accounts that house your AI interactions is a sensible response to that reality.

What to expect

OpenAI is rolling the feature out to users who are concerned about being targeted - so if that sounds like you, it's worth keeping an eye on your account settings. The company appears to be positioning this as an opt-in layer for those who want it, rather than forcing additional friction on everyone.

It's a measured approach, and honestly the right one. Not every user needs a maximum-security mode, but those who do need it badly - and now they have a path to get it. If you're doing serious, sensitive work through any OpenAI product, this is worth paying attention to when it arrives in your settings.