If you've been following the AI space, you know the conversation has shifted from pure excitement to something more complicated. Now a new development is raising eyebrows and setting off alarm bells.

OpenAI recently testified in support of an Illinois bill that would significantly limit the legal liability of AI companies, according to reporting from Wired. The proposed legislation is notable for how far it reaches: it would restrict when AI labs could be held responsible for harm caused by their products, even in cases classified as "critical harm" - a category that includes mass deaths and large-scale financial disasters.

Why this matters more than you might think

It's easy to scroll past tech policy news, but this one is worth pausing on. The question of who bears responsibility when AI causes serious harm is one of the defining legal and ethical challenges of our moment. We're talking about real-world consequences - the kind that affect people's lives, livelihoods, and safety.

Liability law is essentially how society holds powerful actors accountable. When companies know they can be sued for damages, they have a strong incentive to make their products safer. Strip away that accountability, and the calculation changes.

The fact that OpenAI, one of the most powerful and influential AI companies in the world, is actively pushing for reduced legal exposure is significant. It suggests where at least part of the industry's priorities lie as AI systems become more capable and more deeply embedded in everyday life.

The bigger picture on AI regulation

This isn't happening in isolation. States across the US are scrambling to figure out how to regulate AI, often moving faster than federal lawmakers. Illinois is one of several states where proposed AI legislation has sparked intense debate between tech companies eager to move fast and advocates who want stronger consumer protections.

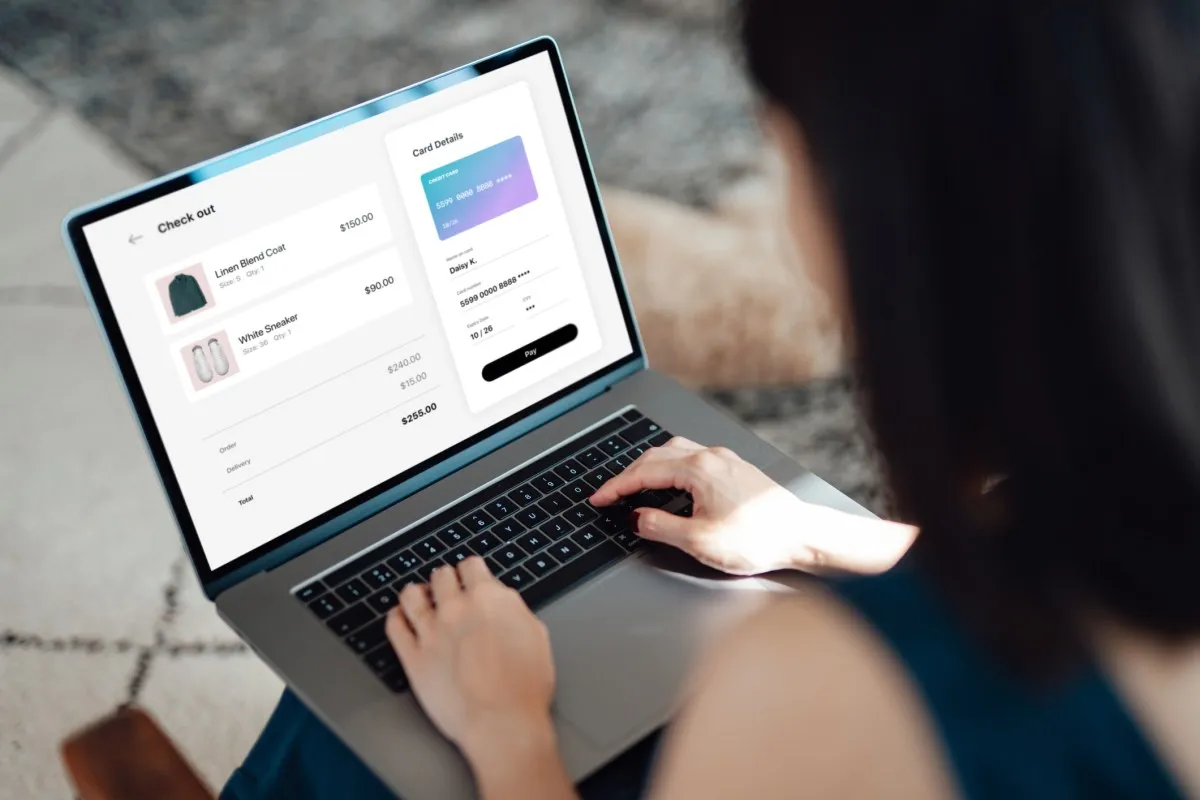

The tension here is real and not easily resolved. Overly restrictive liability rules could genuinely slow down innovation. But laws that give AI companies a near-blanket pass on catastrophic outcomes feel deeply uncomfortable at a time when these systems are being deployed in healthcare, finance, infrastructure, and more.

What to watch next

Whether or not the Illinois bill passes, this moment is a preview of fights to come. As AI becomes more powerful, the question of legal accountability isn't going away - it's going to get louder. Paying attention to who's shaping these laws, and why, is one of the most important things any of us can do right now.

For the full details on OpenAI's testimony and the specifics of the bill, Wired has the complete story.