We've all noticed the telltale signs: articles that feel slightly off, product descriptions that are technically correct but somehow hollow, blog posts that cover every angle without actually saying anything. AI-generated content has become a fixture of the modern internet - and according to a new study highlighted by Wired, its impact on the web is stranger than most of us expected.

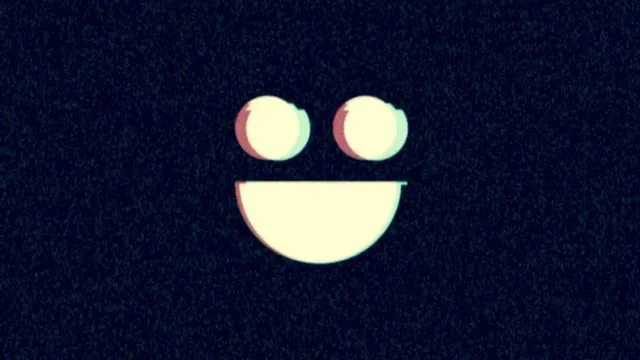

The internet is getting happier - artificially

The study found that the rise of AI-generated websites isn't just adding more noise to the internet. It's actually changing the emotional character of online content - and the shift is toward relentless, almost eerie positivity. AI-written content tends to skew upbeat, which sounds harmless enough until you consider what gets lost when a huge chunk of the web starts radiating manufactured cheerfulness.

Real human writing is messy, contradictory, and emotionally varied. It complains, doubts, jokes darkly, and tells inconvenient truths. AI-generated content, by contrast, tends to flatten that complexity into something blandly pleasant - optimized for engagement metrics rather than genuine expression.

Why this matters more than you might think

The problem isn't just aesthetic. When more and more of the content we encounter online is engineered to feel positive and reassuring, it can subtly distort our sense of reality. Search results, news aggregators, and social feeds increasingly surface AI-written material - and if that material is uniformly upbeat, we get a skewed picture of how people actually think and feel about things.

There's also a trust dimension here. Most of us have developed a reasonable nose for sponsored content or PR-speak. But AI slop - the term increasingly used for low-quality, mass-produced AI content - is harder to detect, and its artificial warmth can feel disarmingly genuine on first read.

The real cost of fake-happy content

One of the subtler consequences is what this flood of synthetic positivity does to authentic voices online. When AI content drowns out genuine human perspectives, the internet becomes a less useful and less honest place - a hall of mirrors reflecting a world that's tidier, happier, and less real than the one we actually live in.

The study's findings are a useful reminder that the conversation about AI and the internet needs to go beyond questions of misinformation and copyright. The emotional texture of the web matters too. An internet smoothed into perpetual cheerfulness by algorithmic content mills isn't just annoying. It's a quieter form of distortion than outright falsehood, and one worth paying attention to.

So the next time something you're reading feels just a little too agreeable, too polished, too relentlessly fine - trust that instinct. It might be trying to tell you something.