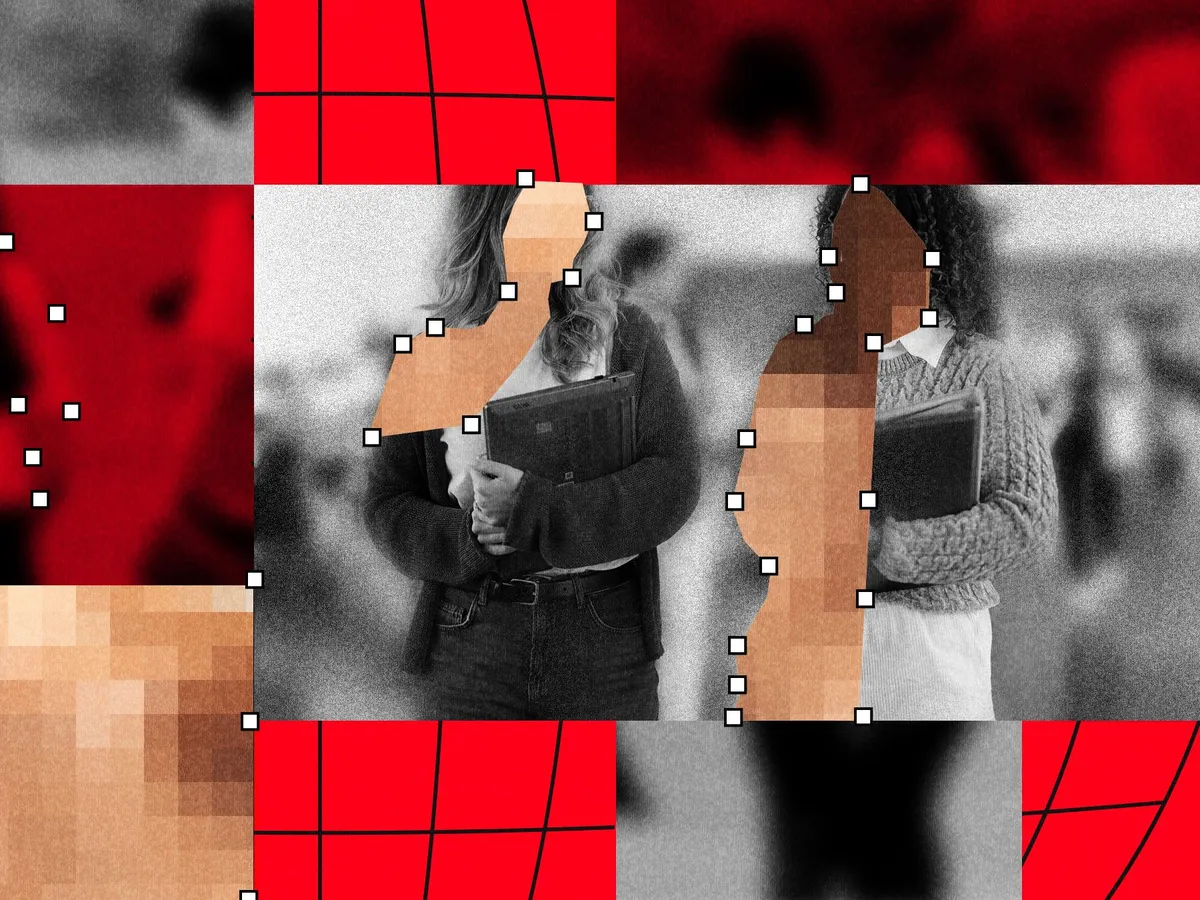

If you thought the problem of AI-generated deepfake nudes in schools was a niche issue affecting a handful of unfortunate cases, new research is about to reframe the picture entirely - and it's deeply unsettling.

An investigation by WIRED and Indicator has uncovered that nearly 90 schools and around 600 students worldwide have been caught up in incidents involving AI-generated explicit images. These aren't cases of traditional image theft or editing. They involve so-called "nudify" technology: tools that can generate realistic fake nude images of real people using nothing more than a clothed photo.

Why this matters beyond the headlines

The victims are overwhelmingly young people - students who are supposed to feel safe at school. Having fake explicit images of yourself circulated among peers isn't just embarrassing or upsetting in the moment. Research consistently links this kind of image-based abuse to serious, lasting psychological harm, including anxiety, depression, and social withdrawal.

What makes this particularly alarming is how accessible the technology has become. You don't need technical skills or expensive software. These nudify tools are often free or cheap, widely available online, and require almost no effort to use. The barrier to causing real harm to a real person has essentially collapsed.

A global problem with no easy fix

The roughly 90 schools and 600 students identified in the WIRED and Indicator analysis almost certainly represent an undercount. Many cases go unreported because of stigma or shame, or because students simply don't know what recourse they have. Schools and parents are often unprepared to respond, and laws in many countries haven't kept pace with the technology.

Some places are beginning to act. Legislation targeting non-consensual intimate imagery, including AI-generated versions, has been introduced or passed in several jurisdictions. But enforcement remains patchy, and the tools generating these images continue to operate in legal grey zones or ignore takedown requests outright.

What needs to change

This isn't just a tech problem or a school discipline problem - it's a societal one. Young people need clearer education about digital consent and the real-world consequences of this kind of abuse. Schools need actual protocols, not just vague acceptable-use policies. Platforms hosting nudify tools need to face meaningful accountability. And anyone affected deserves to know that what happened to them has a name, that it's a form of abuse, and that it's not their fault.

The WIRED and Indicator findings are a wake-up call that the scale of this crisis has been badly underestimated. The technology isn't going away - which means the urgency to respond to it properly has never been greater.